kubernetes作为云原生不可或缺的重要组件,在编排调度系统中一统天下,成为事实上的标准,如果kubernetes出现问题,那将是灾难性的。而我们在容器化推进的过程中,就遇到了很多有意思的故障,今天我们就来分析一个“雪崩”的案例。

问题描述

第一次故障

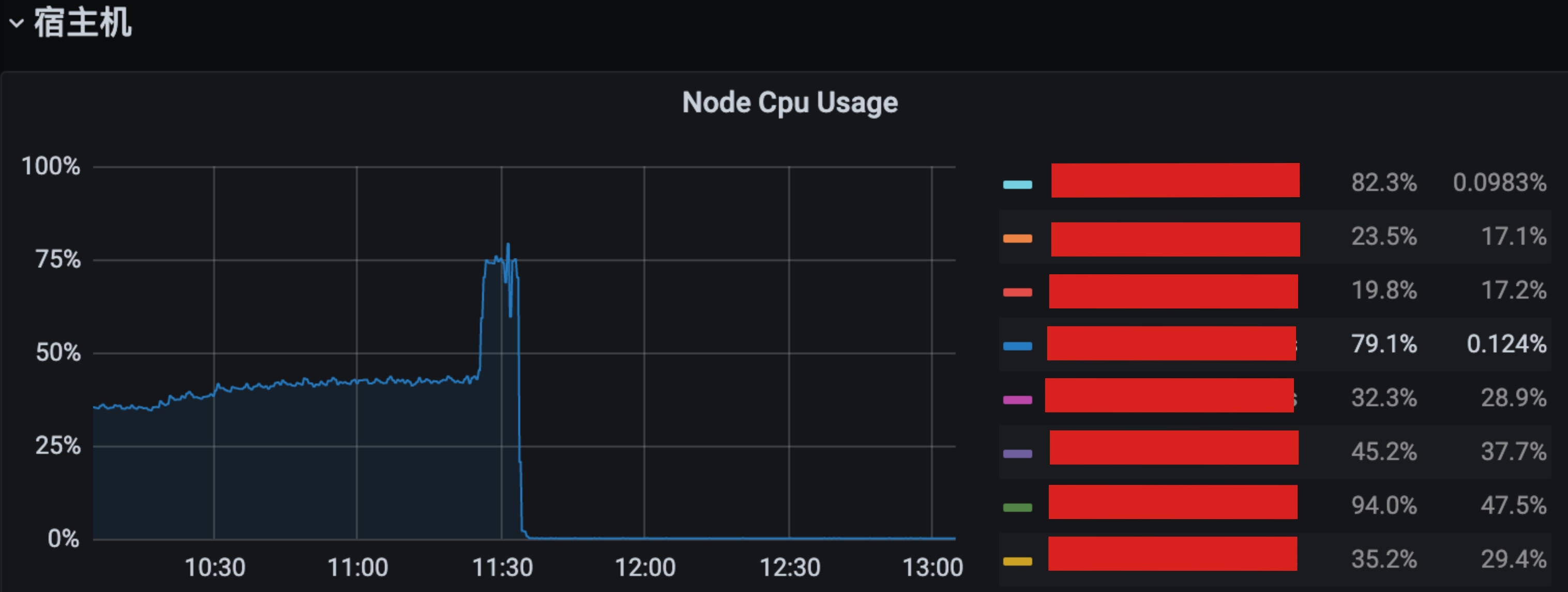

一个黑色星期三,突然收到大量报警:服务大量5xx;某台kubernetes宿主机器CPU飚高到近80%;内存增速过快等等。

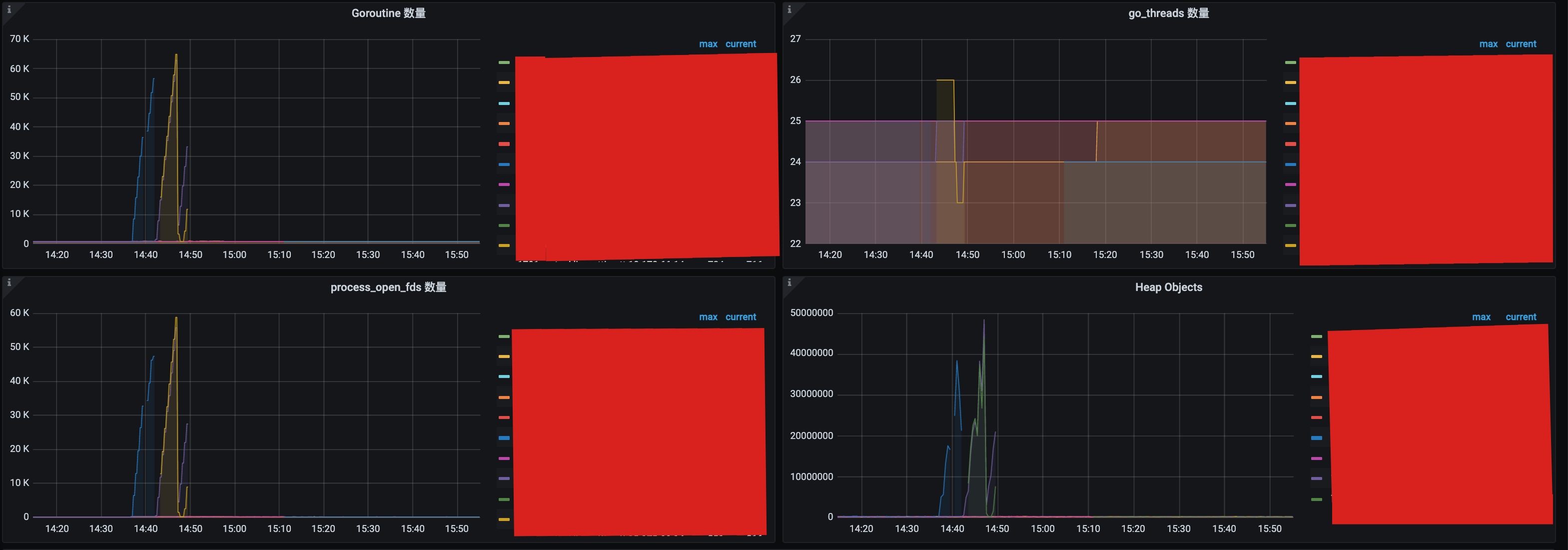

赶紧打开监控,发现一些服务的超时请求很多,内存急剧增高,gc变慢,CPU、goroutines暴涨等等。

粗略分析看不出到底哪个是因哪些是果,于是启动应急预案,赶紧将此机器上的POD迁移到其他机器上,迁移后服务运行正常,流量恢复。

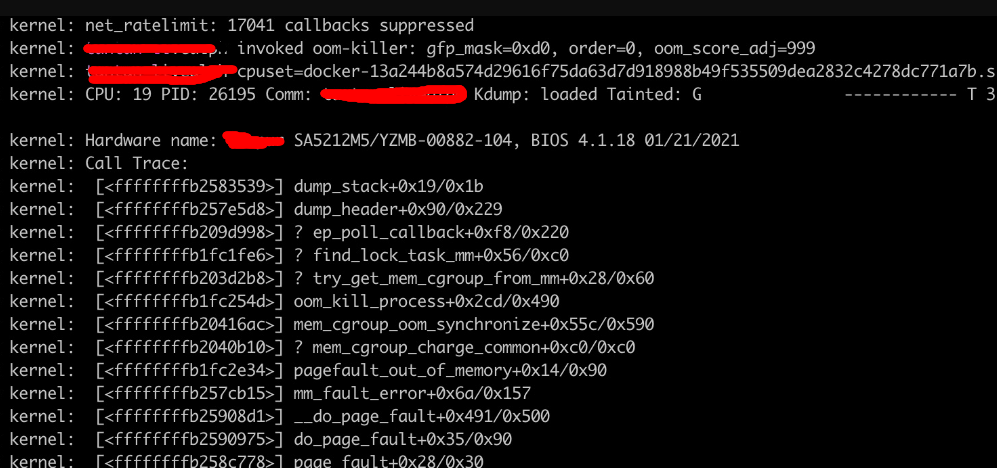

初步解决问题,开始详细分析原因,从监控看实在分析不出原因,也没有内核日志,只有故障4分钟后的一条OOM kill的日志,没有什么价值。

但是从监控上看A服务在此机器上的POD问题尤为严重。

由于当时急于恢复流量,没有保留好现场,以为是某个服务出现什么问题导致的(当时还猜测可能linux某部分隔离性没有做好引发的)。

服务故障部分监控

宿主机

内核OOM日志

第二次故障

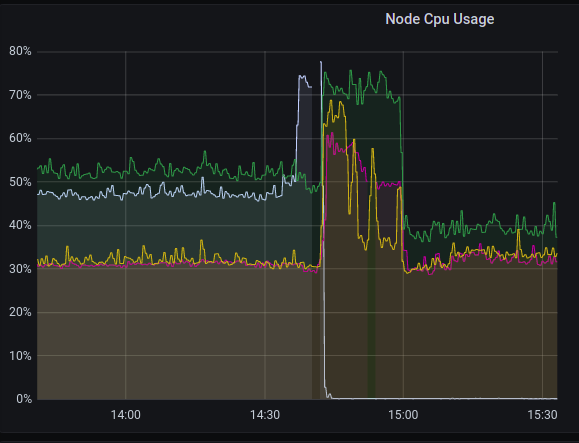

3个小时后,又一台机器发生故障,先恢复故障的同时使用perf捕获了调用栈,然后又继续骚操作将POD迁移走,这一次就导致其他3台宿主机出现问题了,这3台宿主机上的服务以及依赖这些服务的服务也相继出现问题。

这时,才意识到是什么达到了临界,才会导致其他机器相继出现问题。于是赶紧将一些服务转移到物理机部署,宿主机立即恢复正常)。

宿主机CPU利用率

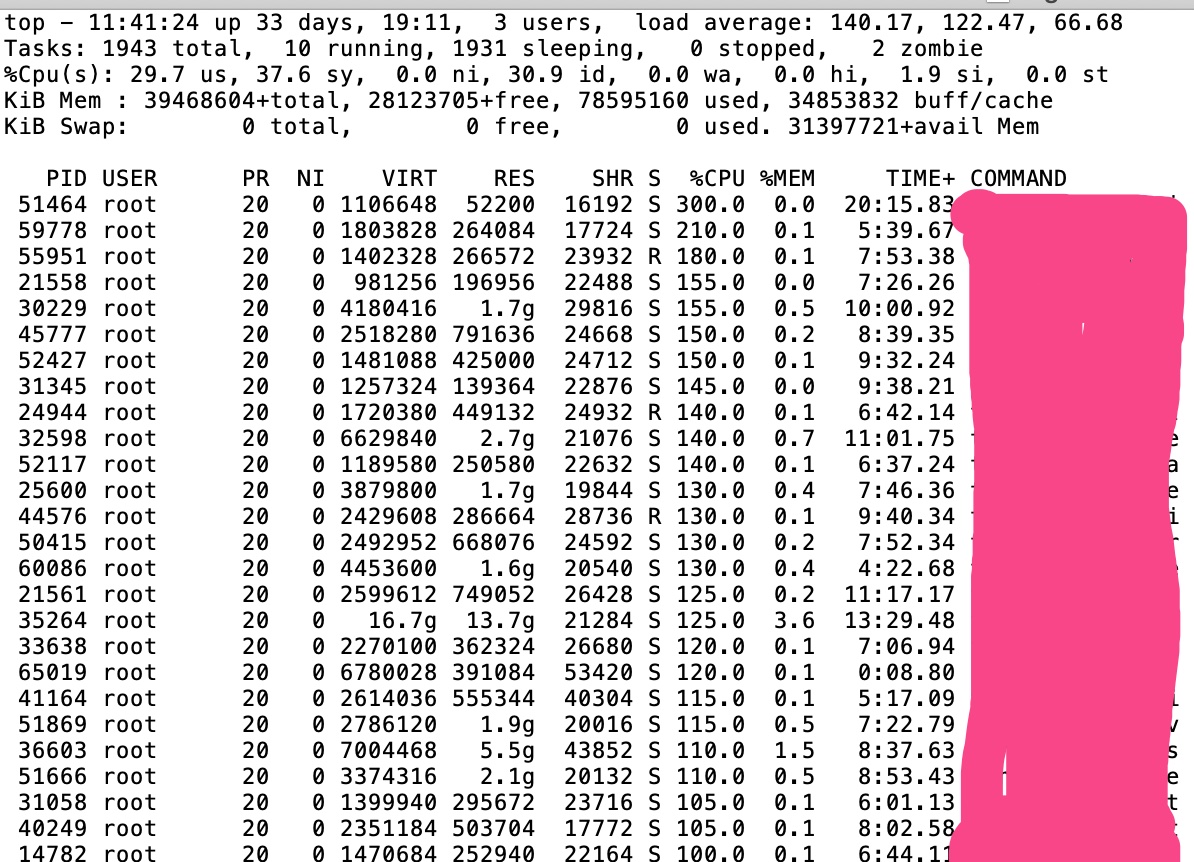

top查看到很多服务的CPU使用率都很高

perf record

初步分析

虽然问题初步解决了,但还得继续分析根本原因。

从perf record来看,大多数进程的调用都blocking在__write_lock_failed函数,大部分都是由于__neigh_create调用造成的,于是先google,搜索到相似问题的文章: - https://www.cnblogs.com/tencent-cloud-native/p/14481570.html

但还不能如此草率的下定论,仍然需要进一步分析才能盖棺定论。

内核源码分析

线上机器内核较老,3.10.0-1160.36.2.el7.x86_64版本, 由于有tcp流量也有udp流量,所以两种协议都要进行分析。

系统调用

从系统调用send开始分析

1 | SYSCALL_DEFINE4(send, int, fd, void __user *, buff, size_t, len, |

1 | int inet_sendmsg(struct kiocb *iocb, struct socket *sock, struct msghdr *msg, size_t size) |

inet_sendmsg会调用不同协议的发送函数, - udp协议:udp_sendmsg - tcp协议:tcp_sendmsg

协议层

udp

1 | int udp_sendmsg(struct kiocb *iocb, struct sock *sk, struct msghdr *msg, size_t len) |

最终调用到ip_local_out函数

tcp

1 | int tcp_sendmsg(struct kiocb *iocb, struct sock *sk, struct msghdr *msg, size_t size) |

1 | void __tcp_push_pending_frames(struct sock *sk, unsigned int cur_mss, int nonagle) |

1 | // 网络层 |

最终也调用到ip_local_out函数

网络层

1 | int ip_local_out(struct sk_buff *skb) |

邻居子系统

重点来了!

调用ip_finish_output2到达邻居子系统。 1

2

3

4

5

6

7

8

9

10

11

12

13

14

15

16

17

18

19

20

21

22

23

24

25

26

27

28static inline int ip_finish_output2(struct sk_buff *skb)

{

struct dst_entry *dst = skb_dst(skb);

struct rtable *rt = (struct rtable *)dst;

struct net_device *dev = dst->dev; // 出口设备

......

rcu_read_lock_bh();

// 下一跳

nexthop = (__force u32) rt_nexthop(rt, ip_hdr(skb)->daddr);

// 根据下一跳和设备名去arp缓存表查询,如果没有,则创建

neigh = __ipv4_neigh_lookup_noref(dev, nexthop);

if (unlikely(!neigh))

neigh = __neigh_create(&arp_tbl, &nexthop, dev, false);

if (!IS_ERR(neigh)) {

int res = dst_neigh_output(dst, neigh, skb);

rcu_read_unlock_bh();

return res;

}

rcu_read_unlock_bh();

kfree_skb(skb);

return -EINVAL;

}

- 下一跳为网关,则返回网关地址。

- 下一跳为POD的IP地址,所以对于macvlan的网络架构来说,同一宿主机的两个POD之间互相访问,都会产生两条ARP表项。

1

2

3

4

5

6static inline __be32 rt_nexthop(const struct rtable *rt, __be32 daddr)

{

if (rt->rt_gateway)

return rt->rt_gateway;

return daddr;

}

查找ARP表项

__ipv4_neigh_lookup_noref是根据 出口设备“唯一标示”和目标ip 计算一个hash值,然后在 arp hash table中查找是否存在此arp项 所以arp hash table里面存储的条目是全局的 - dev:可以到达neighbour的设备,是dev设备指针的值,而此时在内核态,所以可以看作是设备的唯一标示 - ip:L3层目标地址(下一跳地址)

1 | static inline struct neighbour *__ipv4_neigh_lookup_noref(struct net_device *dev, u32 key) |

创建邻居表项

如果ARP缓存表不存在此项,则创建, 创建失败,返回-EINVAL。 这就是问题所在了,没有任何内核日志,返回一个有歧义的错误码,也就导致无法快速定位原因。

还好在linux kernel 5.x内核代码中,会打印报错信息: neighbour: arp_cache: neighbor table overflow!

1 | struct neighbour *__neigh_create(struct neigh_table *tbl, const void *pkey, |

分配neighbour, 同时如果ARP缓存表超过gc_thresh3,则强制做一次gc。

如果gc没有回收任何过期ARP记录且表项数超过gc_thresh3,则返回NULL,标识分配邻居表项失败 1

2

3

4

5

6

7

8

9

10

11

12

13

14

15

16

17

18

19

20

21

22

23

24

25

26

27

28

29// 分配失败,返回NULL

static struct neighbour *neigh_alloc(struct neigh_table *tbl, struct net_device *dev)

{

struct neighbour *n = NULL;

unsigned long now = jiffies;

int entries;

entries = atomic_inc_return(&tbl->entries) - 1;

// 如果超过gc_thres3或者

// 超过gc_thresh2且5秒没有刷新(HZ一般为1000)

// 则强制gc

if (entries >= tbl->gc_thresh3 ||

(entries >= tbl->gc_thresh2 &&

time_after(now, tbl->last_flush + 5 * HZ))) {

if (!neigh_forced_gc(tbl) &&

entries >= tbl->gc_thresh3)

goto out_entries; // arp表项超过阈值 且回收失败,

}

n = kzalloc(tbl->entry_size + dev->neigh_priv_len, GFP_ATOMIC);

......

out:

return n;

out_entries:

atomic_dec(&tbl->entries);

goto out;

}

对ARP表进行垃圾回收,回收期间需要获取写锁。

如果成功回收则返回1,否则返回0 1

2

3

4

5

6

7

8

9

10

11

12

13

14

15

16

17

18

19

20

21

22

23

24

25

26

27

28

29

30

31

32

33

34

35

36

37

38

39

40static int neigh_forced_gc(struct neigh_table *tbl)

{

// 获取 arp 缓存hash table 锁

write_lock_bh(&tbl->lock);

nht = rcu_dereference_protected(tbl->nht,

lockdep_is_held(&tbl->lock));

for (i = 0; i < (1 << nht->hash_shift); i++) {

struct neighbour *n;

struct neighbour __rcu **np;

np = &nht->hash_buckets[i];

while ((n = rcu_dereference_protected(*np,

lockdep_is_held(&tbl->lock))) != NULL) {

/* Neighbour record may be discarded if:

* - nobody refers to it.

* - it is not permanent

*/

write_lock(&n->lock);

if (atomic_read(&n->refcnt) == 1 &&

!(n->nud_state & NUD_PERMANENT)) {

rcu_assign_pointer(*np,

rcu_dereference_protected(n->next,

lockdep_is_held(&tbl->lock)));

n->dead = 1;

shrunk = 1;

write_unlock(&n->lock);

neigh_cleanup_and_release(n);

continue;

}

write_unlock(&n->lock);

np = &n->next;

}

}

tbl->last_flush = jiffies;

write_unlock_bh(&tbl->lock);

return shrunk;

}

1 | static inline int dst_neigh_output(struct dst_entry *dst, struct neighbour *n, |

ARP

下面的源码分析其实已经不重要了,

neigh_resolve_output会间接调用函数neigh_event_send(), 实现邻居项状态从 NUD_NONE 到 NUD_INCOMPLETTE 状态的改变 1

2

3

4

5

6

7

8

9

10

11

12

13

14

15

16

17

18

19

20

21

22

23

24

25

26

27

28

29

30

31

32

33

34

35

36

37

38

39

40

41

42

43

44

45

46

47

48

49

50

51

52

53

54

55

56

57

58

59

60

61

62

63

64

65

66

67

68

69

70

71

72

73

74

75

76

77

78

79

80

81

82

83

84

85

86

87

88

89

90

91

92

93

94

95

96

97

98

99

100

101

102

103

104

105

106/* Slow and careful. */

int neigh_resolve_output(struct neighbour *neigh, struct sk_buff *skb)

{

......

// 发送arp请求

if (!neigh_event_send(neigh, skb)) {

// 如果neigh已经连接,则发送skb

int err;

struct net_device *dev = neigh->dev;

unsigned int seq;

if (dev->header_ops->cache && !neigh->hh.hh_len)

neigh_hh_init(neigh, dst); // 初始化mac缓存值

do {

__skb_pull(skb, skb_network_offset(skb));

seq = read_seqbegin(&neigh->ha_lock);

err = dev_hard_header(skb, dev, ntohs(skb->protocol),

neigh->ha, NULL, skb->len);

} while (read_seqretry(&neigh->ha_lock, seq));

if (err >= 0)

rc = dev_queue_xmit(skb); // 真正发送数据

else

goto out_kfree_skb;

}

......

}

EXPORT_SYMBOL(neigh_resolve_output);

static inline int neigh_event_send(struct neighbour *neigh, struct sk_buff *skb)

{

......

if (!(neigh->nud_state&(NUD_CONNECTED|NUD_DELAY|NUD_PROBE)))

return __neigh_event_send(neigh, skb);

return 0;

}

int __neigh_event_send(struct neighbour *neigh, struct sk_buff *skb)

{

write_lock_bh(&neigh->lock);

......

// 初始化阶段

if (!(neigh->nud_state & (NUD_STALE | NUD_INCOMPLETE))) {

if (neigh->parms->mcast_probes + neigh->parms->app_probes) {

unsigned long next, now = jiffies;

atomic_set(&neigh->probes, neigh->parms->ucast_probes);

neigh->nud_state = NUD_INCOMPLETE; // 设置表项尾incomplete

neigh->updated = now;

next = now + max(neigh->parms->retrans_time, HZ/2);

neigh_add_timer(neigh, next); // 定时器:刷新状态后在neigh_update中发送skb

immediate_probe = true;

} else {

......

}

......

}

if (neigh->nud_state == NUD_INCOMPLETE) {

if (skb) {

while (neigh->arp_queue_len_bytes + skb->truesize >

neigh->parms->queue_len_bytes) {

struct sk_buff *buff;

// 丢弃

buff = __skb_dequeue(&neigh->arp_queue);

if (!buff)

break;

neigh->arp_queue_len_bytes -= buff->truesize;

kfree_skb(buff);

NEIGH_CACHE_STAT_INC(neigh->tbl, unres_discards);

}

skb_dst_force(skb);

__skb_queue_tail(&neigh->arp_queue, skb); //报文放入arp_queue队列中,arp_queue 队列用于缓存等待发送的数据包

neigh->arp_queue_len_bytes += skb->truesize;

}

rc = 1;

}

out_unlock_bh:

if (immediate_probe)

neigh_probe(neigh); // 进行探测,发送arp

else

write_unlock(&neigh->lock);

local_bh_enable();

return rc;

}

EXPORT_SYMBOL(__neigh_event_send);

static void neigh_probe(struct neighbour *neigh)

__releases(neigh->lock)

{

struct sk_buff *skb = skb_peek(&neigh->arp_queue); // 取出报文

/* keep skb alive even if arp_queue overflows */

if (skb)

skb = skb_copy(skb, GFP_ATOMIC);

write_unlock(&neigh->lock);

neigh->ops->solicit(neigh, skb); // 发送arp请求,arp_solicit

atomic_inc(&neigh->probes);

kfree_skb(skb);

}

ARP响应的处理

1 | static struct packet_type arp_packet_type __read_mostly = { |

自旋锁

对邻居表进行修改需要获取写锁,而这个锁的实现为自旋锁。

1 | static inline void __raw_write_lock_bh(rwlock_t *lock) |

根本原因

原因已经明确了,由于POD之间通信都会产生2条ARP记录缓存在ARP表中,如果宿主机上POD较多,则ARP表项会很多,而linux对ARP大小是有限制的。

ARP表项相关的内核参数

| 内核参数 | 含义 | 默认值 |

|---|---|---|

| /proc/sys/net/ipv4/neigh/default/base_reachable_time | reachable 状态基础过期时间 | 30秒 |

| /proc/sys/net/ipv4/neigh/default/gc_stale_time | stale 状态过期时间 | 60秒 |

| /proc/sys/net/ipv4/neigh/default/delay_first_probe_time | delay 状态过期到 probe 的时间 | 5秒 |

| /proc/sys/net/ipv4/neigh/default/gc_interval | gc 启动的周期时间 | 30秒 |

| /proc/sys/net/ipv4/neigh/default/gc_thresh1 | 最小可保留的表项数量 | 128 |

| /proc/sys/net/ipv4/neigh/default/gc_thresh2 | 最多纪录的软限制 | 512 |

| /proc/sys/net/ipv4/neigh/default/gc_thresh3 | 最多纪录的硬限制 | 1024 |

ARP表垃圾回收机制

表的垃圾回收分为同步和异步: - 同步:neigh_alloc函数被调用时执行 - 异步:gc_interval指定的周期性gc

回收策略 - arp 表项数量 < gc_thresh1:不做处理 - gc_thresh1 =< arp 表项数量 <= gc_thresh2:按照 gc_interval 定期启动ARP表垃圾回收 - gc_thresh2 < arp 表项数量 <= gc_thresh3,5 * HZ(一般5秒)后ARP表垃圾回收 - arp 表项数量 > gc_thresh3:立即启动ARP表垃圾回收

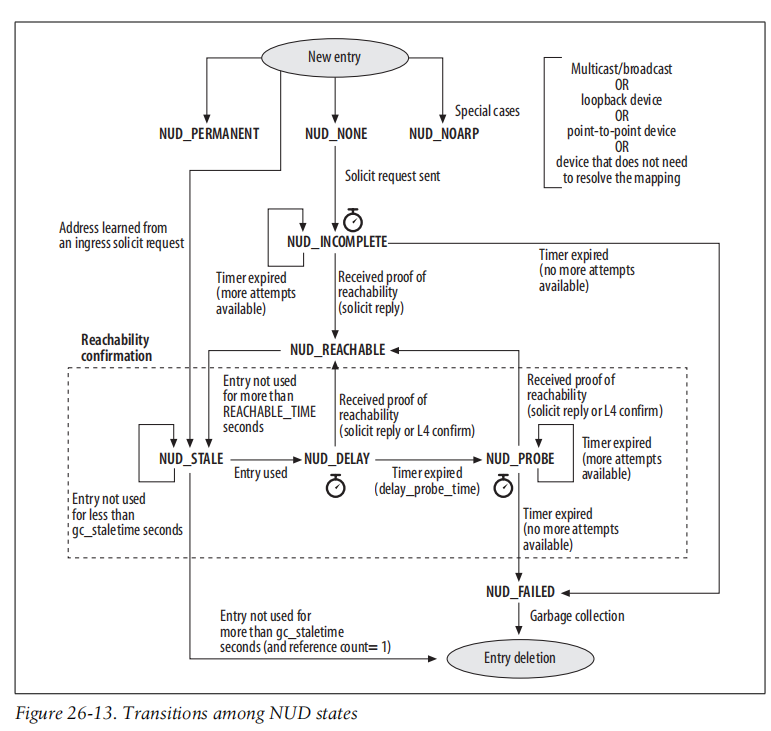

ARP表项状态转换

如图所示,只有两种状态的ARP记录才能被回收: - stale状态过期后 - failed状态

结论

由于我们kubernetes是基于macvlan网络的,所以会为每个POD分配一个mac地址,这样同一台宿主机的POD之间访问,就会产生2条ARP表项。

所以,当ARP表项超过gc_thresh3,则会触发回收ARP表,当没有可回收的表项时,则会导致neigh_create分配失败,从而导致系统调用失败。

那么上层应用层很可能会进行不断重试,也会有其他流量调用内核发送数据,最终只要数据接收方的ARP记录不在ARP表中,都会导致进行垃圾回收,而垃圾回收需要加写锁后再对ARP表进行遍历,这个遍历虽然耗时不长,但是持写锁时间 * 回收次数 导致持写锁的总体时间很长,这样就导致大量的锁竞争(自旋),导致CPU暴涨,从而引发故障。

验证

先将gc_thresh改小

1

2

3

4#!/bin/bash

echo 6 > /proc/sys/net/ipv4/neigh/default/gc_thresh1

echo 7 > /proc/sys/net/ipv4/neigh/default/gc_thresh2

echo 8 > /proc/sys/net/ipv4/neigh/default/gc_thresh3ping下其他POD,让ARP表大小达到阈值

1

2

3

4

5

6

7

8

9[root@d /]# ip neigh

10.170.254.32 dev eth0 lladdr 02:00:0a:aa:fe:20 REACHABLE

10.170.254.1 dev eth0 lladdr 2c:21:31:79:96:c0 REACHABLE

10.170.254.27 dev eth0 lladdr 02:00:0a:aa:fe:1b REACHABLE

10.170.254.24 dev eth0 lladdr 02:00:0a:aa:fe:18 REACHABLE

10.170.254.25 dev eth0 lladdr 02:00:0a:aa:fe:19 REACHABLE

10.170.254.30 dev eth0 lladdr 02:00:0a:aa:fe:1e REACHABLE

10.170.254.28 dev eth0 lladdr 02:00:0a:aa:fe:1c REACHABLE

10.170.254.29 dev eth0 lladdr 02:00:0a:aa:fe:1d REACHABLEping下不在ARP表中的POD,发现ping命令“卡住了”

1

2[root@d tmp]# ping 10.170.254.10

PING 10.170.254.10 (10.170.254.10) 56(84) bytes of data.再将

gc_thresh改大1

echo 80 > /proc/sys/net/ipv4/neigh/default/gc_thresh3

ping命令立即恢复了

1

2

3

4

5

6

7

8[root@dns-8685bbc547-4dmld tmp]# ping 10.170.254.10

PING 10.170.254.10 (10.170.254.10) 56(84) bytes of data.

64 bytes from 10.170.254.10: icmp_seq=125 ttl=64 time=2004 ms

64 bytes from 10.170.254.10: icmp_seq=126 ttl=64 time=1004 ms

64 bytes from 10.170.254.10: icmp_seq=127 ttl=64 time=4.04 ms

64 bytes from 10.170.254.10: icmp_seq=128 ttl=64 time=0.022 ms

64 bytes from 10.170.254.10: icmp_seq=129 ttl=64 time=0.021 ms

64 bytes from 10.170.254.10: icmp_seq=130 ttl=64 time=0.020 ms

总结

当将线上kubernetes宿主机的ARP参数都改大后,再也没有出现过此类问题了。 1

2

3sysctl -w net.ipv4.neigh.default.gc_thresh3=32768

sysctl -w net.ipv4.neigh.default.gc_thresh2=16384

sysctl -w net.ipv4.neigh.default.gc_thresh1=8192

虽然问题发生在kubernetes上,但是本质还是由于对底层OS不了解导致的。